
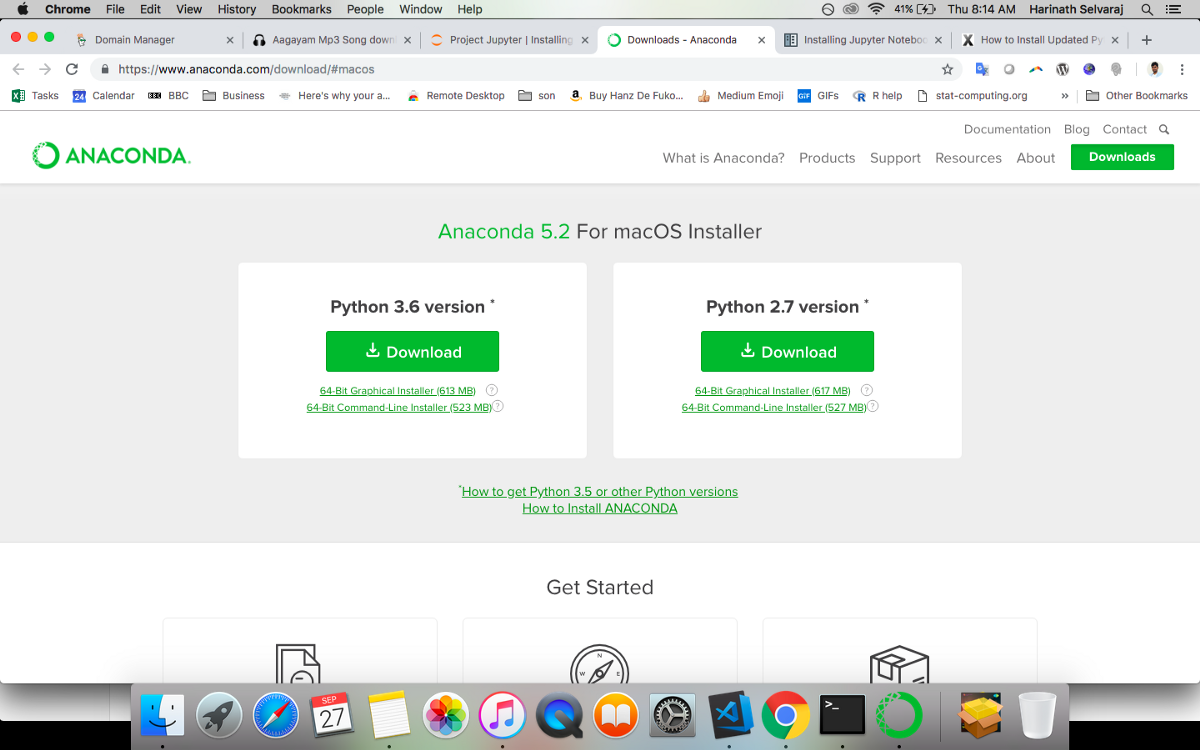

Anaconda Individual Edition is described by its makers as the world’s most popular Python distribution platform, with over 20 million users worldwide and a long-term commitment to supporting the Anaconda open-source ecosystem. If you use Anaconda, the first step is to locate the path of your Anaconda installation. (May 26, 2020)

Upgrade to Python 3.x

Download and install Python 3.x. For this tutorial I have used 3.5.

Once you have downloaded and run the installer, Python 3 is installed on your system. The installer also adds its location to your default path in .bash_profile, so that when you type python3 on the command line, the system can find it. You'll know you've been successful if you see the Python interpreter launch.
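As a quick sanity check after the upgrade, a small script can confirm which interpreter is found on the path and whether it is new enough. This is only a sketch: the minimum of 3.5 mirrors the version used in this tutorial, and the helper names are made up for illustration.

```python
import shutil
import sys

# Version used in this tutorial; adjust if you installed something newer.
MINIMUM = (3, 5)


def meets_minimum(version_info=sys.version_info, minimum=MINIMUM):
    """Return True if the (major, minor) version is at least `minimum`."""
    return tuple(version_info[:2]) >= minimum


def python3_on_path():
    """Return the full path of the python3 command, or None if it is not found."""
    return shutil.which("python3")


def report():
    """Print where python3 lives and whether this interpreter is recent enough."""
    print("python3 found at:", python3_on_path())
    print("running version:", ".".join(str(part) for part in sys.version_info[:3]))
    print("meets minimum:", meets_minimum())
```

Calling report() from the interpreter you just launched shows whether the shell picks up the new installation rather than an older system Python.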

Install pip

Fire up your Terminal and type:

Install PySpark on Mac

Next, we will edit our .bash_profile so we can open a Spark notebook in any directory. So fire up your Terminal and open .bash_profile in a text editor.

The relevant settings are the PYSPARK_DRIVER_PYTHON parameter and the PYSPARK_DRIVER_PYTHON_OPTS parameter, which are used to launch the PySpark shell in Jupyter Notebook. The --master parameter is used for setting the master node address. Here we launch Spark locally on 2 cores for local testing.
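To make the settings above concrete, here is a minimal sketch that generates the kind of lines one might place in .bash_profile, plus a matching launch command. The values jupyter and notebook are the ones commonly paired with these two variables, but they are an assumption here because the original profile is not shown; the helper names are hypothetical.

```python
import shlex

# Assumed values: run Jupyter as the PySpark driver and open the notebook UI.
DRIVER_SETTINGS = {
    "PYSPARK_DRIVER_PYTHON": "jupyter",
    "PYSPARK_DRIVER_PYTHON_OPTS": "notebook",
}


def profile_export_lines(settings=DRIVER_SETTINGS):
    """Return shell export lines suitable for pasting into .bash_profile."""
    return [
        "export {}={}".format(name, shlex.quote(value))
        for name, value in settings.items()
    ]


def launch_command(cores=2):
    """Return a pyspark command that runs Spark locally on `cores` cores."""
    master = "local[{}]".format(cores)
    # The brackets are quoted so the shell does not treat them as a glob.
    return "pyspark --master {}".format(shlex.quote(master))


if __name__ == "__main__":
    for line in profile_export_lines():
        print(line)
    print(launch_command(2))
```

Printing the lines first, instead of writing to the profile directly, leaves it up to you what actually goes into the file.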

Install Jupyter Notebook with pip

First, ensure that you have the latest pip; older versions may have trouble with some dependencies. Then install the Jupyter Notebook with pip.

That's it!

You can now run jupyter notebook in the command line. A browser window should open with Jupyter Notebook running under http://localhost:8888/.
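The two pip steps can also be driven from Python, which guarantees that pip belongs to the same interpreter you intend to use. This is a sketch, not the original commands from this post: it assumes the package name jupyter, and run_steps is a hypothetical helper that really does install packages when called.

```python
import subprocess
import sys


def pip_command(*args):
    """Build a pip invocation bound to the currently running interpreter."""
    return [sys.executable, "-m", "pip"] + list(args)


# Step 1: upgrade pip itself. Step 2: install the Jupyter Notebook.
UPGRADE_PIP = pip_command("install", "--upgrade", "pip")
INSTALL_JUPYTER = pip_command("install", "jupyter")


def run_steps(steps=(UPGRADE_PIP, INSTALL_JUPYTER)):
    """Run each step in order and stop at the first one that fails."""
    for step in steps:
        print("running:", " ".join(step))
        subprocess.check_call(step)
```

Call run_steps() only when you actually want to install; merely defining it changes nothing on your system.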

Configure Jupyter Notebook to show line numbers

Run jupyter --config-dir to get the Jupyter config directory. Mine is located under /Users/lucas/.jupyter.

Inside that directory, create a custom directory (if it does not already exist), then create or open the file custom.js in it and add the JavaScript that turns on line numbers.

You could add any JavaScript. It will be executed by the IPython notebook at load time.
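The steps above can be sketched in Python as follows. Two things are assumptions rather than taken from this post: the JavaScript line is the snippet commonly used for the classic notebook, and the config directory is resolved from JUPYTER_CONFIG_DIR with a fallback to ~/.jupyter. Check both against your own installation.

```python
import os
from pathlib import Path

# Assumed snippet for the classic notebook; not taken from the original post.
LINE_NUMBERS_JS = "IPython.Cell.options_default.cm_config.lineNumbers = true;"


def jupyter_config_dir():
    """Return JUPYTER_CONFIG_DIR if it is set, otherwise ~/.jupyter."""
    override = os.environ.get("JUPYTER_CONFIG_DIR")
    return Path(override) if override else Path.home() / ".jupyter"


def enable_line_numbers(config_dir):
    """Create custom/custom.js under config_dir and add the snippet once."""
    custom_dir = Path(config_dir) / "custom"
    custom_dir.mkdir(parents=True, exist_ok=True)
    custom_js = custom_dir / "custom.js"
    existing = custom_js.read_text() if custom_js.exists() else ""
    if LINE_NUMBERS_JS not in existing:
        # Keep whatever is already in the file and append on a fresh line.
        separator = "" if existing == "" or existing.endswith("\n") else "\n"
        custom_js.write_text(existing + separator + LINE_NUMBERS_JS + "\n")
    return custom_js
```

Running enable_line_numbers(jupyter_config_dir()) twice leaves a single copy of the line in custom.js, so it is safe to repeat.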

Install a Java 9 Kernel

Install Java 9 and note the location of its Java home.

Install kulla.jar. I have installed it under ~/opt/.

Download the kernel. Again, I placed the entire javakernel directory under ~/opt/.

This kernel expects two environment variables to be defined, which can be set in kernel.json. So go ahead and edit kernel.json in the kernel you have just downloaded so that it sets them.

Then create a java directory for the kernel and copy the edited kernel.json into it.
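For orientation, here is a rough sketch of how a kernel.json with an env section could be generated and written into a kernel directory. The keys argv, display_name, language and env are standard kernel spec fields, but everything else is a placeholder: the variable names, the paths, and the assumption that the kernel is launched with python3 are hypothetical and must be replaced with what the kernel's own documentation specifies.

```python
import json
from pathlib import Path

# Hypothetical placeholders: substitute the two variable names and values
# that the kernel you downloaded actually expects.
EXAMPLE_ENV = {
    "FIRST_VARIABLE_NAME": "/path/to/first/value",
    "SECOND_VARIABLE_NAME": "/path/to/second/value",
}


def build_kernel_spec(launcher_path, env):
    """Assemble a kernel.json dictionary from standard kernel spec keys."""
    return {
        # Command Jupyter runs to start the kernel; {connection_file} is
        # filled in by Jupyter at launch time. python3 is an assumption.
        "argv": ["python3", str(launcher_path), "{connection_file}"],
        "display_name": "Java 9",
        "language": "java",
        # Environment variables passed to the kernel process.
        "env": dict(env),
    }


def write_kernel_spec(kernel_dir, spec):
    """Write spec as kernel.json inside kernel_dir, creating it if needed."""
    kernel_dir = Path(kernel_dir)
    kernel_dir.mkdir(parents=True, exist_ok=True)
    target = kernel_dir / "kernel.json"
    target.write_text(json.dumps(spec, indent=2) + "\n")
    return target
```

Generating the file from a dictionary avoids hand-editing mistakes such as a trailing comma, which would make the kernel spec unreadable.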

Install gnureadline by running pip install gnureadline in the command line.

If all worked, you should be able to run the kernel.

Whether it’s for social science, marketing, business intelligence or something else, the number of times data analysis benefits from heavy duty parallelization is growing all the time.

Apache Spark is an awesome platform for big data analysis, so getting to know how it works and how to use it is probably a good idea. Setting up your own cluster, administering it and so on is a bit of a hassle to just learn the basics though (although Amazon EMR or Databricks make that quite easy, and you can even build your own Raspberry Pi cluster if you want…), so getting Spark and Pyspark running on your local machine seems like a better idea. You can also use Spark with R and Scala, among others, but I have no experience with how to set that up. So, we’ll stick to Pyspark in this guide.

While dipping my toes into the water I noticed that all the guides I could find online weren’t entirely transparent, so I’ve tried to compile the steps I actually did to get this up and running here. The original guides I’m working from are here, here and here.

Prerequisites

Before we can actually install Spark and Pyspark, there are a few things that need to be present on your machine.

You need the XCode Developer Tools, homebrew, pipenv and Java.

Installing the XCode Developer Tools

Installing homebrew

Installing Pipenv

If that doesn’t work for some reason, you can run pip install --user pipenv instead.

This does a pip user install, which puts pipenv in your home directory. If pipenv isn’t available in your shell after installation, you need to add its location to your PATH. Here’s pipenv’s guide on how to do that.

Installing Java

To run, Spark needs Java installed on your system. It’s important that you do not install Java with brew, for uninteresting reasons. Just go here to download Java for your Mac and follow the instructions.

You can confirm Java is installed by typing $ java -version in Terminal.
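If you would rather check for Java from a script, for example at the top of a setup notebook, something along these lines should work. It is a sketch with a hypothetical helper name; it relies on java -version printing its banner to the error stream and treats a missing or non-working java command as "not installed".

```python
import shutil
import subprocess


def java_version_banner():
    """Return the output of `java -version`, or None if Java is unavailable."""
    java = shutil.which("java")
    if java is None:
        return None
    result = subprocess.run(
        [java, "-version"],
        stdout=subprocess.PIPE,
        stderr=subprocess.PIPE,
        universal_newlines=True,
    )
    if result.returncode != 0:
        # A placeholder java command without a real runtime ends up here.
        return None
    # The version banner is written to stderr rather than stdout.
    banner = (result.stderr or result.stdout).strip()
    return banner or None


if __name__ == "__main__":
    banner = java_version_banner()
    print(banner if banner else "Java does not appear to be installed.")
```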

Installing Spark

With the pre-requisites in place, you can now install Apache Spark on your Mac.

Installing Pyspark

I recommend that you install Pyspark in your own virtual environment using pipenv to keep things clean and separated.

Your ~/.bashrc or ~/.zshrc should now have a section with your Spark settings.

Now save the file and reload it in your Terminal, for example with source ~/.bashrc or source ~/.zshrc.

To start Pyspark and open up Jupyter, you can simply run $ pyspark. You only need to make sure you’re inside your pipenv environment. That means starting it from a shell opened with pipenv shell, or prefixing the command with pipenv run.

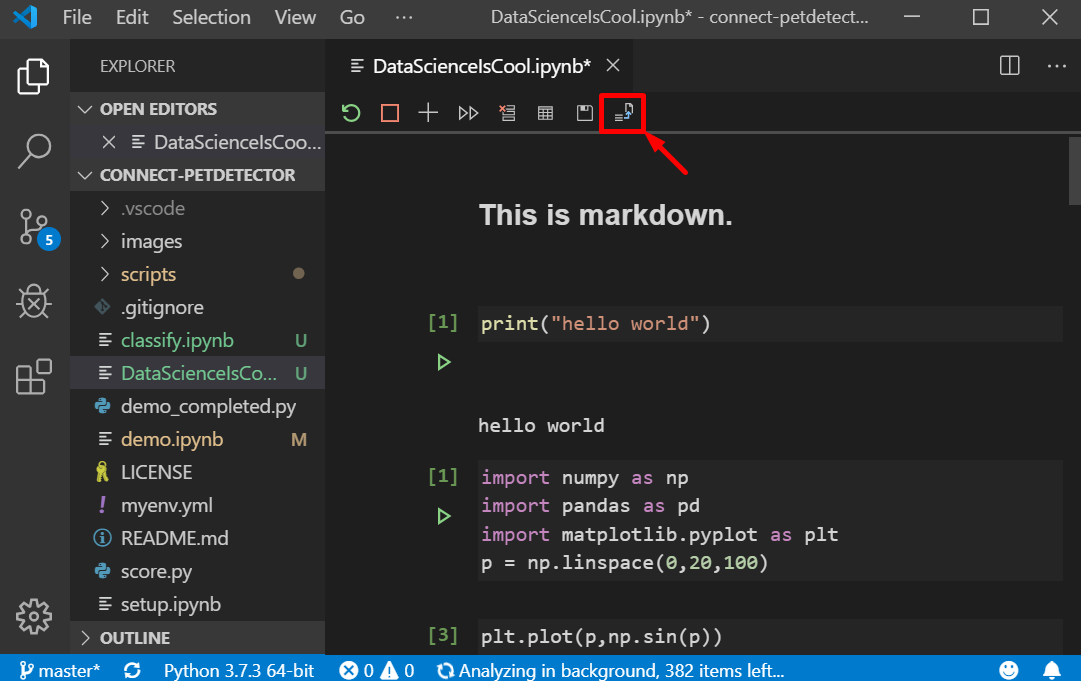

Using Pyspark inside your Jupyter Notebooks

To test whether Pyspark is running as it is supposed to, put the following code into a new notebook and run it:

(You might need to install numpy inside your pipenv environment if you haven’t already done so without my instruction.)
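As a stand-in for the test cell, here is a minimal sketch of a small Spark job that estimates pi from random points. It is not the original code from this post: it uses Python's built-in random module instead of numpy, and the function names are made up. It assumes sc is the SparkContext that the pyspark launcher provides in the notebook.

```python
import random


def random_points(n, seed=0):
    """Return n points drawn uniformly from the square [-1, 1] x [-1, 1]."""
    rng = random.Random(seed)
    return [(2.0 * rng.random() - 1.0, 2.0 * rng.random() - 1.0) for _ in range(n)]


def inside_unit_circle(point):
    """Return True if the point lies within the circle of radius 1."""
    x, y = point
    return x * x + y * y <= 1.0


def estimate_pi(sc, n=100000):
    """Estimate pi as 4 times the fraction of points that fall in the circle.

    `sc` is expected to be a SparkContext; the filtering and counting are
    distributed across the local Spark workers.
    """
    points = sc.parallelize(random_points(n))
    inside = points.filter(inside_unit_circle).count()
    return 4.0 * inside / n


# In a notebook started via pyspark, run in a later cell:
#     print(estimate_pi(sc))
```

If the result is somewhere near 3.14, Spark is accepting work from the notebook and returning results.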

If you get an error along the lines of sc is not defined, you need to add sc = SparkContext.getOrCreate() at the top of the cell (after importing SparkContext from pyspark). If things are still not working, make sure you followed the installation instructions closely.

Still no luck? Send me an email if you want (I most definitely can’t guarantee that I know how to fix your problem), particularly if you find a bug and figure out how to make it work!
